Abstract

In manufacturing, assembly tasks have been a challenge for learning algorithms due to variant dynamics of different environments. Reinforcement learning (RL) is a promising framework to automatically learn these tasks, yet it is still not easy to apply a learned policy or skill, that is the ability of solving a task, to a similar environment even if the deployment condition is only slightly different. In this paper, we address the challenge of transferring knowledge within a family of similar tasks by leveraging multiple skill priors.

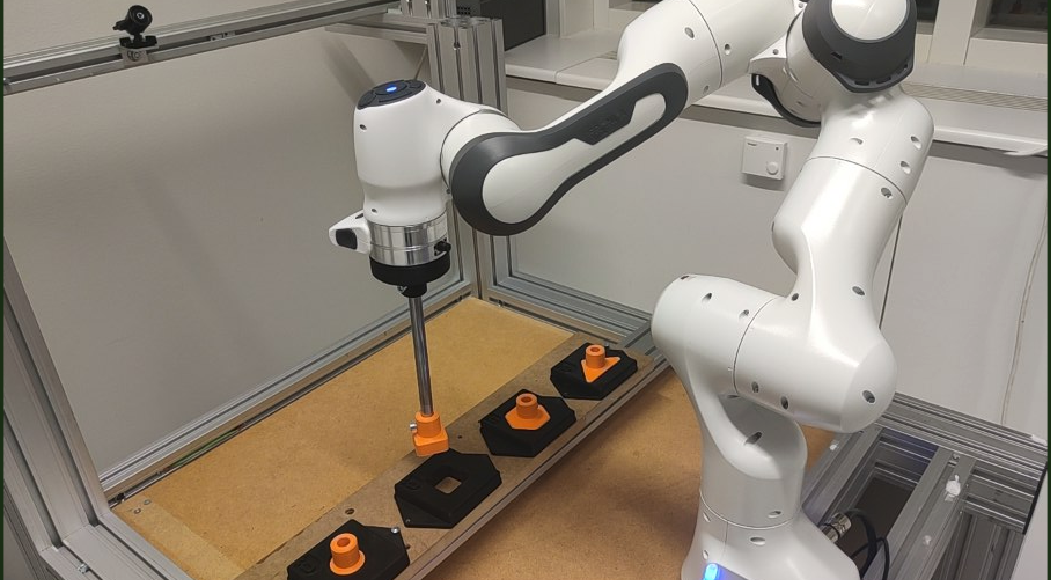

We propose to learn prior distribution over the specific skill required to accomplish each task and compose the family of skill priors to guide learning the policy for a new task by comparing the similarity between the target task and the prior ones. Our method learns a latent action space representing the skill embedding from demonstrated trajectories for each prior task. We have evaluated our method on a task in simulation and a set of peg-in-hole insertion tasks and demonstrate better generalization to new tasks that have never been encountered during training. Our Multi-Prior Regularized RL (MPR-RL) method is deployed directly on a real world Franka Panda arm, requiring only a set of demonstration trajectories from similar, but crucially not identical, problem instances.

Full paper here: [PDF]